All Aboard, an app developed by Gang Luo, PhD, and his team, uses artificial intelligence to help people who are blind access public transportation.

Think of the last time you used a mobile map app to find a bus stop during your travels. Once you arrived at the destination, you could see the sign and pinpoint exactly where to stand for the bus to pull over. However, people who have impaired vision lack this ability. What they often do is wait for their bus right where the mobile app indicates they have “arrived” at their destination.

While existing map and wayfinding apps can lead users to the vicinity of a bus stop using GPS technology, they lack the pinpoint accuracy required to get users to the actual stop where the bus sign is located. This gap is known in the mobility rehabilitation community as the “last 10-meter problem,” explained Gang Luo, PhD, an associate scientist at the Schepens Eye Research Institute of Mass Eye and Ear and an associate professor at Harvard Medical School, who led the project.

“This is enough of a distance gap for a bus driver to drive by the stop, not realizing a person who is blind is waiting for the bus,” said Dr. Luo.

This problem can pose significant challenges for the many people with low vision who rely on public transportation, especially in major metropolitan cities.

In an attempt to fill this gap, Dr. Luo and his team of researchers and developers, including Shrinivas Pundlik, Anurag Shubham and Eric Jiang, have created a new mobile app called All Aboard, powered by artificial intelligence (AI), which aims to change the way people who are blind commute using just their smartphone.

How the All Aboard app works

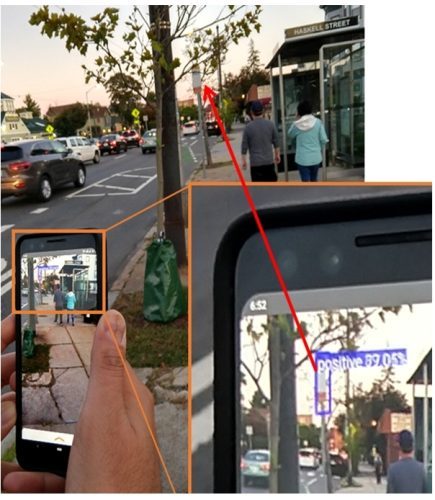

Funded by an AI for Accessibility grant from Microsoft, the iOS version of the All Aboard app launched this month in the App Store. The app captures live images from a smartphone camera and uses a customized AI model to detect bus stop signs and estimate their distance. When a bus stop sign is identified, the app beeps, and the beeps get progressively faster the closer the person gets to the actual bus stop.
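The article does not describe how the app converts distance into beep rate, so the following is only a minimal sketch of one way such feedback could work, assuming a simple linear mapping. The function name, the distance range and the interval values are all hypothetical and are not taken from All Aboard.

```python
def beep_interval_seconds(distance_m, near_m=1.0, far_m=10.0,
                          min_interval=0.1, max_interval=1.0):
    """Map an estimated distance to the sign onto the pause between beeps.

    All parameters are illustrative assumptions, not values from the app.
    """
    # Clamp the estimate so out-of-range distances still give a valid interval.
    clamped = max(near_m, min(far_m, distance_m))
    # 0.0 at the near limit, 1.0 at the far limit.
    fraction = (clamped - near_m) / (far_m - near_m)
    # Shorter pause (faster beeping) as the user gets closer.
    return min_interval + fraction * (max_interval - min_interval)


if __name__ == "__main__":
    for d in (10.0, 5.5, 1.0):
        print(f"{d} m: beep every {beep_interval_seconds(d):.2f} s")
```

In this sketch, a user 10 meters from the sign would hear one beep per second, and the pause would shrink steadily to a tenth of a second as the user reaches the sign.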

[Watch and listen to this video on how the app works]

In practice, bus riders with low vision can first walk to the vicinity of a bus stop using their usual GPS navigation apps, and then open All Aboard to find the precise location of the bus stop. Dr. Luo encourages people with impaired vision to have a loved one or friend with normal sight help set up the app before using it for the first time.

[Watch and listen to this video on how to set up and use All Aboard]

In designing their app, Dr. Luo’s development team trained an AI-based deep neural network to identify bus stops. They analyzed 5,000 to 10,000 images per city, compiled from nine cities and one country: Greater Boston, Los Angeles, New York City, Washington D.C., San Francisco, Seattle, Toronto, London, Chicago, and Germany.

Real-world testing

In a recent study presented at the 2021 Association for Research in Vision and Ophthalmology (ARVO) Annual Meeting, the app was shown to be highly effective at solving the last 10-meter problem. In the study, the app was tested at 20 bus stop locations in Los Angeles, including 10 in high-density areas near high-rise buildings and 10 in low-density areas in the suburbs. A researcher walked under the guidance of auditory cues from All Aboard and Google Maps, starting about 80 feet away from each stop. The successful navigation rate to the bus stop with All Aboard was 95 percent, compared to 65 percent with Google Maps. All Aboard was also found to have smaller errors in localization.

The early findings point to the promise the app has to offer. The researchers are now planning to evaluate the impact of the app on actual users’ daily lives. “We really want to see whether this app can improve quality of life for people who are blind,” said Dr. Luo.

The team also hopes to soon add more cities to its existing list of service regions.

Apps to assist the low-vision population

Dr. Luo and his team have conducted years of research on assistive technology for people with low vision, including mobile apps and a wearable collision warning device. His team previously released three vision apps to the public for free: SuperVision+ Goggles, SuperVision+ Magnifier, and SuperVision Search, the last of which is a smart magnifier that helps users scan for keywords using the phone camera, such as specific words on a directory board or product tags on a grocery shelf. All Aboard is the fourth app released by this group.

Given the ubiquity of smartphones in the population, apps are no longer considered the future for the low-vision community; they are the present.

“We develop apps for people with low vision because nearly everyone has a smartphone at this point,” said Dr. Luo. “This is the quickest way to get helpful assistive technology into the hands of people across the globe.”

In addition to Dr. Luo, the team consists of researchers Anurag Shubam, Eric Jiang, Zhicheng Ma, Abhishek Singh, Akash Bobba, SaengMoung Park and Shrinivas Pundlik.

For more information and to download All Aboard, visit the App Store.